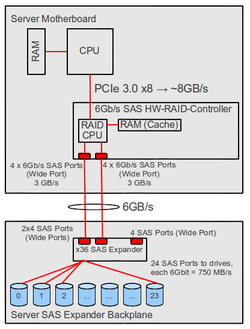

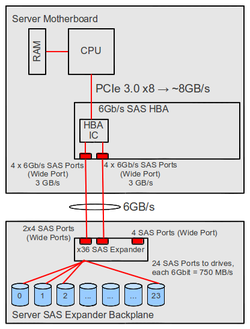

Differences between hardware RAID and Linux software RAID

On Linux, the question often arises whether a hardware RAID controller or Linux software RAID should be used for the storage subsystem. This article compares hardware RAID with Linux software RAID.

Comparison table

| | HW RAID | SW RAID |
|---|---|---|
| Controller | HW RAID controller | SATA ports of the chipset or SAS HBA |
| Setup | | |
| RAID calculations | in the HW RAID chip | in the CPU |
| Writing | | |
| Reading | | |
| Supported operating systems | dependent on the RAID controller, usually an extensive list of operating systems: | |
| SSD support | | |
| Booting from RAID | | |
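With software RAID, the parity calculations for levels such as RAID 5 run on the host CPU instead of on a controller chip. As a rough illustration only, the following Python sketch shows the byte-wise XOR parity that RAID 5 builds on. It is a simplified model (a single stripe, no parity rotation across disks, no second syndrome as in RAID 6) and not the Linux MD implementation; the helper names `compute_parity` and `reconstruct_missing` are hypothetical.

```python
from typing import Sequence


def xor_blocks(blocks: Sequence[bytes]) -> bytes:
    """Byte-wise XOR of equal-length blocks (the core of RAID 5 parity)."""
    if not blocks:
        raise ValueError("at least one block is required")
    length = len(blocks[0])
    if any(len(block) != length for block in blocks):
        raise ValueError("all blocks in a stripe must have the same length")
    parity = bytearray(length)  # starts as all zero bytes
    for block in blocks:
        for i, byte in enumerate(block):
            parity[i] ^= byte
    return bytes(parity)


def compute_parity(data_blocks: Sequence[bytes]) -> bytes:
    """Hypothetical helper: parity block for one stripe of data blocks."""
    return xor_blocks(data_blocks)


def reconstruct_missing(surviving: Sequence[bytes], parity: bytes) -> bytes:
    """Hypothetical helper: rebuild the single missing data block of a stripe.

    XOR is its own inverse, so XOR-ing the surviving data blocks with the
    parity block yields the block that was lost.
    """
    return xor_blocks(list(surviving) + [parity])


if __name__ == "__main__":
    stripe = [b"\x01\x02", b"\x10\x20", b"\xff\x00"]
    p = compute_parity(stripe)
    print(p.hex())  # ee22
    rebuilt = reconstruct_missing([stripe[0], stripe[2]], p)
    print(rebuilt == stripe[1])  # True
```

With hardware RAID, this work is done by the RAID chip on the controller, so it does not consume host CPU cycles; with software RAID, every write to a parity-based array triggers calculations of this kind on the CPU.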

Author: Werner Fischer

Werner Fischer, working in the Knowledge Transfer team at Thomas-Krenn, completed his studies of Computer and Media Security at FH Hagenberg in Austria. He is a regular speaker at many conferences like LinuxTag, OSMC, OSDC, LinuxCon, and author for various IT magazines. In his spare time he enjoys playing the piano and training for a good result at the annual Linz marathon relay.


Translator: Alina Ranzinger

Alina has been working at Thomas-Krenn.AG since 2024. After training as a multilingual business assistant, she became assistant to Product Management, where she is responsible for translating texts and organising the department.
