Ceph

Ceph is a highly available, distributed, and robust file system. It was introduced in 2007 by Sage A. Weil as part of his dissertation at the University of California, Santa Cruz.[1] Weil also founded Inktank Storage, a company that was primarily responsible for the development of Ceph from 2011 and was acquired by Red Hat in 2014.[2]

Ceph as distributed file system

Ceph has the following characteristics:

- scalable, especially horizontally (scale-out)

- usable with commodity hardware

- flexible and self-managing

- Object Store - RADOS (Reliable Autonomic Distributed Object Store)

- no Single Point of Failure - distribution onto multiple nodes

- software based and Open Source

- unified storage

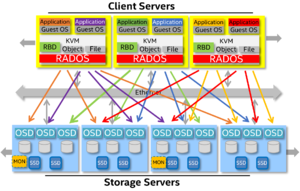

Ceph cluster

Ceph is a distributed file system over multiple nodes. This is why it is also referred to as a Ceph cluster. There are always multiple roles in a Ceph cluster, which are taken over by individual nodes.

Monitors

Ceph monitors, or monitoring nodes, build a so-called cluster map. They are responsible for generating and maintaining a consistent cluster status. A Ceph monitor is a daemon or system service that is responsible for the status and configuration information.

A Ceph cluster typically runs an odd number of monitors, with smaller clusters running 3 or 5. A majority of the monitors (more than half) must be running to form a quorum. Otherwise, the cluster loses its defined state and becomes inaccessible to clients.[3]
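Monitors need a strict majority to form a quorum, and this can be illustrated with a small calculation. The following is a minimal sketch and not part of Ceph; the function names `quorum_size` and `has_quorum` are made up for illustration.

```python
def quorum_size(monitor_count: int) -> int:
    """Smallest number of monitors that forms a strict majority."""
    if monitor_count < 1:
        raise ValueError("a cluster needs at least one monitor")
    return monitor_count // 2 + 1


def has_quorum(monitor_count: int, running: int) -> bool:
    """True if the running monitors form a strict majority."""
    return running >= quorum_size(monitor_count)


if __name__ == "__main__":
    for total in (3, 4, 5):
        needed = quorum_size(total)
        print(f"{total} monitors: quorum needs {needed}, "
              f"tolerates {total - needed} failure(s)")
```

With this rule, 3 and 4 monitors both tolerate only one failed monitor, which is one reason why odd monitor counts are common.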

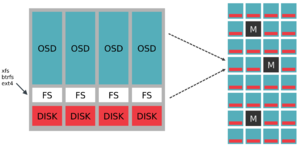

Object Storage node

An Object Storage node is the essential storage component in the storage cluster. It implements an Object Storage Device (OSD) and connects it to the cluster. An OSD consists of:

The OSD daemon is a service that supplies the clients with the stored objects. OSDs can either be primary or secondary. Primary OSDs are responsible for:

- replication, coherence, re-balancing and recovery

Secondary OSDs:

- are under the control of the primary, but can also become primary themselves.

Write requests from clients (client-initiated writes) are always accepted by the primary OSD.[4] The intelligent communication between OSDs to respond to client requests is also referred to as "peering".

OSD journal

By default, Ceph stores a journal on the same storage device as each OSD.[5]

Before data is finally written to the OSDs, it always goes to the journal first. In addition, the replicas are distributed to other nodes according to the configuration. The client only receives its ACK (confirmation that the operation has been completed) once the replicas of its data have been created. However, it is sufficient for the data to be in the journals of the other nodes. Ceph then gradually writes the data from the journals to the OSDs. This procedure shows how important journal performance is for processing client requests in the Ceph cluster. Red Hat writes about this topic in a Ceph hardware guide:[6]

Since Ceph has to write all data to the journal before it can send an ACK (for XFS and EXT4 at least), having the journal and OSD performance in balance is really important!
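The write path described above (journal first, ACK once all replicas are journaled, flush to the OSD later) can be sketched as a toy model. This is only an illustration under simplifying assumptions, not Ceph code: the class `ToyOSD` and the function `client_write` are hypothetical, and networking, failures, and ordering are ignored.

```python
from dataclasses import dataclass, field


@dataclass
class ToyOSD:
    """Hypothetical stand-in for an OSD with a journal and a data store."""
    name: str
    journal: list = field(default_factory=list)
    store: dict = field(default_factory=dict)

    def journal_write(self, key: str, data: bytes) -> None:
        # Step 1: the write lands in the journal first.
        self.journal.append((key, data))

    def flush(self) -> None:
        # Step 2 (later): journal entries are moved to the data store.
        for key, data in self.journal:
            self.store[key] = data
        self.journal.clear()


def client_write(primary: ToyOSD, replicas: list, key: str, data: bytes) -> str:
    """Journal the write on the primary and on all replicas, then acknowledge."""
    primary.journal_write(key, data)
    for osd in replicas:
        osd.journal_write(key, data)
    # Every journal now holds the data, so the client is acknowledged
    # although no OSD has flushed the data to its store yet.
    return "ACK"


if __name__ == "__main__":
    osds = [ToyOSD("osd.0"), ToyOSD("osd.1"), ToyOSD("osd.2")]
    print(client_write(osds[0], osds[1:], "obj", b"hello"))
    for osd in osds:
        osd.flush()
```

In this model the time until the ACK depends only on the journal writes, which mirrors why journal performance matters so much for client latency.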

The journals are often moved to SSDs to increase performance. In this setup, multiple journals from different OSDs are often placed on a single SSD (no more than 4-5 journals per SSD are recommended). Please note the following points:

- If the SSD fails and with it the journals stored on it, the associated OSDs will also no longer be available. For example, if all the journals of a Ceph node are stored on only one SSD and this SSD fails, all OSDs of the node will be unavailable!

- SSDs that hold the journals of multiple OSDs are subject to a high write load. Pay attention to the endurance of the SSD. It is best to monitor the current status of the SSD with SMART and Icinga with the SMART Attributes Monitoring Plugin.

- If multiple journals are stored on a single SSD, both the speed of random accesses ("random I/O") and the throughput ("sequential I/O") are crucial. When purchasing an SSD from a specific SSD series, be sure to choose a model with at least medium or preferably high capacity. SSD models with higher capacity typically have more flash chips that the SSD controller can use in parallel. Therefore, SSDs with higher capacity offer higher performance (for example, when writing to Intel DC S3610 Series SSDs, 520MB/s for the 800GB model compared to 110MB/s for the 100GB model).
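To get a feeling for the numbers above, the sequential write throughput of an SSD can be divided by the number of journals it hosts. This is a rough estimate only: it assumes that all journals write at the same time and share the throughput evenly, which real workloads will not do exactly. The function name `per_journal_throughput` is made up for this example.

```python
def per_journal_throughput(ssd_write_mb_s: float, journals: int) -> float:
    """Even share of the SSD's sequential write throughput per journal (MB/s)."""
    if journals < 1:
        raise ValueError("at least one journal is required")
    return ssd_write_mb_s / journals


if __name__ == "__main__":
    # Write throughput figures for the two models named in the text.
    for model, mb_s in (("800GB", 520), ("100GB", 110)):
        share = per_journal_throughput(mb_s, 4)
        print(f"{model} model, 4 journals: about {share} MB/s per journal")
```

Under these assumptions, four journals on the 800GB model each get about 130 MB/s, while on the 100GB model each journal is left with about 27.5 MB/s.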

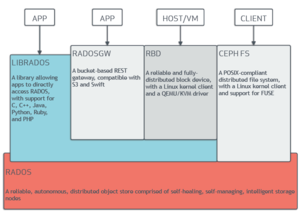

Access paths or Ceph interfaces

A Ceph storage cluster can be accessed in different ways.

radosgw

A RADOS or Ceph gateway is a REST based web service gateway. The RESTful API is Amazon S3 and OpenStack Swift compatible.

RADOS block device

The RADOS block device (RBD), or Ceph block device, is a distributed block device provided by the cluster. It communicates with Ceph either via a Linux kernel module or via the librbd library. The clients work with a virtual block device that is mapped from the Ceph Object Store.

CephFS

CephFS is a POSIX-compliant file system that is distributed across the Ceph cluster.[7] CephFS requires at least one Ceph metadata server (MDS) in the cluster.

librados

The library/API librados is a native interface for direct access to RADOS. Applications that want to access RADOS directly are developed with librados. The interfaces mentioned above (radosgw, RBD, CephFS) themselves rely on the C variant of librados.[8] There are also bindings for C++, Java, Python, Ruby and PHP.
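As an illustration of direct RADOS access, the following sketch uses the Python binding (`rados` module) to write an object and read it back. It assumes a reachable cluster, installed Ceph client packages, a readable configuration and keyring, and an existing pool; the configuration path, the pool name, and the helper name `store_and_fetch` are assumptions made for this example.

```python
def store_and_fetch(pool: str, name: str, payload: bytes,
                    conffile: str = "/etc/ceph/ceph.conf") -> bytes:
    """Write one object to a pool via librados and read it back."""
    # Imported lazily because the module is only available
    # where the Ceph client packages are installed.
    import rados

    cluster = rados.Rados(conffile=conffile)
    cluster.connect()
    try:
        ioctx = cluster.open_ioctx(pool)  # I/O context bound to one pool
        try:
            ioctx.write_full(name, payload)  # replaces the whole object
            return ioctx.read(name, length=len(payload))
        finally:
            ioctx.close()
    finally:
        cluster.shutdown()
```

A call such as `store_and_fetch("mypool", "greeting", b"hello")` would only work against a real cluster with a pool of that (hypothetical) name.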

Distribution of data

Ceph's distribution of data across the cluster is a unique feature developed by Sage A. Weil. The core of the algorithm is a CRUSH map, which is a mechanism to distribute data.

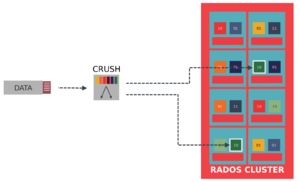

CRUSH Map

CRUSH is the abbreviation for Controlled Replication Under Scalable Hashing. CRUSH is a data placement algorithm, that is, an algorithm for distributing data across the OSDs. Unlike the lookup-based approaches of other file systems, CRUSH relies on a pseudo-random, deterministic calculation when placing objects.

Sage A. Weil writes on page 84 about CRUSH:[1]

I have developed CRUSH (Controlled Replication Under Scalable Hashing), a pseudo-random data distribution algorithm that efficiently and robustly distributes object replicas across a heterogeneous, structured storage cluster. CRUSH is implemented as a deterministic function that maps an input value - typically an object or object group identifier—to a list of devices on which to store object replicas.

For efficient distribution, CRUSH needs a compact, internal description of the available OSDs and the Replica Placement Policy (how many replicas should be kept in the cluster). The advantage is that CRUSH is deterministic, so every node in the cluster and every client can calculate the current location of objects. Furthermore, the metadata overhead of CRUSH is low, as the metadata only changes when OSDs are added or removed.

Another characteristic of CRUSH is that it can be configured using rules. For example, the following can be adjusted:

- replica count

- affinity and distribution rules

- weightings

Sage A. Weil further describes CRUSH's calculations as follows:

Given a single integer input value x, CRUSH will output an ordered list R of n distinct storage targets. CRUSH utilizes a strong multi-input integer hash function whose inputs include x, making the mapping completely deterministic and independently calculable using only the cluster map, placement rules, and x.
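The key property from this quote, a deterministic mapping that anyone can recompute from the same inputs, can be illustrated with a toy function. This is explicitly not the CRUSH algorithm: it ignores the cluster hierarchy, placement rules, and weights, and it uses a simple highest-score selection with SHA-256 as a stand-in hash. The name `toy_place` is hypothetical.

```python
import hashlib


def toy_place(x: int, osds: list, n: int) -> list:
    """Return an ordered list of n distinct OSDs for the input value x.

    Toy stand-in for CRUSH: every OSD gets a pseudo-random score
    derived from (x, osd), and the n highest scores win.
    """
    if n > len(osds):
        raise ValueError("not enough OSDs for the requested replica count")

    def score(osd: str) -> int:
        digest = hashlib.sha256(f"{x}:{osd}".encode()).digest()
        return int.from_bytes(digest[:8], "big")

    return sorted(osds, key=score, reverse=True)[:n]


if __name__ == "__main__":
    cluster = [f"osd.{i}" for i in range(6)]
    # Any client with the same OSD list computes the same placement.
    print(toy_place(42, cluster, 3))
```

Because the result depends only on `x` and the list of OSDs, no lookup table is needed, and removing an OSD that was not selected leaves the placement of `x` unchanged.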

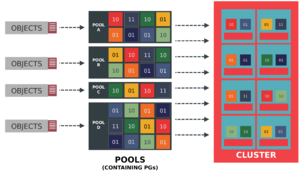

Placement groups and pools

Placement Groups (PGs) and pools are logical constructs that are used to manage objects. They serve as abstraction and grouping.

Placement groups

PGs are logical groupings of objects that are assigned to OSDs. The assignment of Object <-> PG is determined by:

- the hash of the object-name

- the number of replicas

- the total number of PGs

The resulting PG is then incorporated into the CRUSH algorithm.
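The object-to-PG step can be sketched as hashing the object name and reducing the result to the number of PGs. This is a simplified illustration: it uses `zlib.crc32` and a plain modulo as stand-ins for the hash and mapping that Ceph actually uses, and the function name `toy_pg_for_object` as well as the pool id parameter are assumptions made for this example.

```python
import zlib


def toy_pg_for_object(object_name: str, pg_num: int, pool_id: int = 0) -> str:
    """Map an object name to a PG identifier of the form '<pool>.<pg in hex>'."""
    if pg_num < 1:
        raise ValueError("pg_num must be at least 1")
    # Stand-in hash; the same name always yields the same PG.
    pg_index = zlib.crc32(object_name.encode("utf-8")) % pg_num
    return f"{pool_id}.{pg_index:x}"


if __name__ == "__main__":
    for name in ("vm-disk-001", "vm-disk-002", "backup.tar"):
        print(name, "->", toy_pg_for_object(name, pg_num=128, pool_id=1))
```

Only the resulting PG, not the individual object, then has to be placed on OSDs.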

In the next step, the PGs are assigned to the OSDs:

- Each PG is mapped pseudo-randomly to a set of OSDs, independently of the other PGs.

- PGs that are mapped to the same OSD have their replicas on other OSDs.

PGs offer the advantage that operations and algorithms do not have to work at object level, which would be unrealistic and inefficient with many millions of objects.

Pools

Ceph pools are logical partitions for storing objects. Pools consist of a defined number of PGs. Pools are also used to configure

- the number of replicas

- the number of PGs

- the used CRUSH rules

RADOS

RADOS (Reliable Autonomic Distributed Object Store) is the actual object store of Ceph, in which objects are stored.

Sage A. Weil describes RADOS at the beginning of his dissertation as follows:[1]

[...] RADOS, Ceph’s Reliable Autonomic Distributed Object Store. RADOS provides an extremely scalable storage cluster management platform that exposes a simple object storage abstraction. System components utilizing the object store can expect scalable, reliable, and high-performance access to objects without concerning themselves with the details of replication, data redistribution (when cluster membership expands or contracts), failure detection, or failure recovery.

More information

- Ceph Community presentation channel (de.slideshare.net)

References

- [1] Ceph: Reliable, Scalable, and High-Performance Distributed Storage (ceph.io)

- [2] Ceph - History (wikipedia.org)

- [3] Ceph Essentials (storageconference.us)

- [4] Monitoring OSDs and PGs (ceph.com)

- [5] Journal Config Reference (ceph.com)

- [6] Red Hat Ceph Storage 1.2.3 Hardware Guide (access.redhat.com)

- [7] CephFS (ceph.com)

- [8] librados introduction (ceph.com)

Author: Georg Schönberger


Translator: Alina Ranzinger Alina has been working at Thomas-Krenn.AG since 2024. After her training as multilingual business assistant, she got her job as assistant of the Product Management and is responsible for the translation of texts and for the organisation of the department.
