GPU Sensor Monitoring Plugin Setup

This article describes the installation and configuration of the Graphics Processing Unit (GPU) Sensor Monitoring Plugin for Nagios and Icinga. The plugin makes it possible to monitor NVIDIA GPU hardware and reports detailed status information about the current state of the video cards. In the example shown here, the temperature, power usage, fan speed and memory usage are monitored.

Current Version

- Download

- Version 2.3 supported sensors, cf. [2]:

- Clock information

- Fan speed

- ECC error counters

- Temperature

- Power usage

- Memory usage

- Device utilization

- Persistence mode

- Inforom validation (checksum)

- Throttle reasons

- PCIe link settings

Important Prerequisite Information

Note: In response to an inquiry in the NVIDIA forum, it was confirmed that the fan speed reported by nvidia-smi/NVML cannot be used to conclude whether or not the fan is actually rotating. The percentage shows the rotational speed at which the fan control algorithm is attempting to operate the fan. See the discussion in the NVIDIA forum for details.

For that reason, the temperature sensor generally serves as the indicator of a working fan. If the temperature rises continuously without an apparent cause, this may indicate a ventilation problem. If a GPU's temperature climbs above the specified maximum temperature, the fan may be defective.
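The reasoning above (use the temperature trend as a stand-in for fan health) can be sketched in Python. This is only an illustration under stated assumptions: the function name `fan_suspect`, the window of five samples and the maximum of 100 °C (taken from the critical threshold in the sample plugin output later in this article) are hypothetical choices, not part of the plugin. The sketch also cannot tell whether a rise has a legitimate cause such as higher GPU load.

```python
from typing import Sequence


def fan_suspect(temps_c: Sequence[float], max_temp_c: float, window: int = 5) -> bool:
    """Return True if the readings hint at a cooling problem (hypothetical helper)."""
    if not temps_c:
        return False
    # A latest reading above the specified maximum is suspicious on its own.
    if temps_c[-1] > max_temp_c:
        return True
    # Otherwise require a strictly rising run over the last `window` samples.
    recent = temps_c[-window:]
    if len(recent) < window:
        return False
    return all(a < b for a, b in zip(recent, recent[1:]))


# Stable readings around 37-38 degrees are not flagged.
print(fan_suspect([37, 38, 37, 38, 38], max_temp_c=100))
# A steady climb over five samples is flagged.
print(fan_suspect([40, 45, 51, 58, 66], max_temp_c=100))
```

In practice the samples would come from repeated plugin runs or from the stored performance data, and the decision would still need a human look at the GPU load during the same period.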

Download

The plugin is available from GitHub: check_gpu_sensor_v1

A copy of the plugin can be downloaded using the command:

git clone git://github.com/thomas-krenn/check_gpu_sensor_v1.git

or:

git clone https://github.com/thomas-krenn/check_gpu_sensor_v1.git

Useful information and tips on using Git can be found in the series of articles in the Git category.

Installing the check_gpu_sensor Plugin

The following components will be required for the installation and use of the plugin:

- An NVIDIA GPU

- The supported sensors depend on the model

- NVIDIA drivers

- Either a proprietary driver (cf. CUDA Installation#Installing NVIDIA Drivers)

- Or the NVIDIA driver from the package repository

- NVML Perl Bindings (https://search.cpan.org/~nvbinding/)

- Matching the installed driver version

- Icinga Installation

- Debian: an Icinga 1.6.1 under Debian 6.0 Squeeze

- Ubuntu: an Icinga 1.6.1 under Ubuntu 12.04 Precise

- Icinga NRPE for monitoring remote hosts

- See also Icinga NRPE Plugin

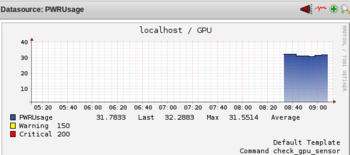

- For displaying the performance data

- The generated performance data can be displayed using graphs (such as the GPU temperature) with PNP4Nagios.

- Debian: Icinga Graphs with PNP under Debian 6.0 Squeeze

- Ubuntu: Icinga Graphs with PNP under Ubuntu 12.04 Precise
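The performance data mentioned above is the part of the plugin output after the `|` character. It follows the usual Nagios plugin convention of `label=value;warn;crit;` entries separated by spaces. The following Python sketch shows how such a line can be split into the status text and the individual values. The sample line is shortened from the plugin output shown later in this article; the function name `parse_plugin_output` is hypothetical, and the sketch assumes values without a unit suffix, as in that sample.

```python
from typing import Dict, Optional, Tuple

# (value, warning threshold, critical threshold); thresholds may be absent.
Perf = Tuple[float, Optional[float], Optional[float]]


def parse_plugin_output(line: str) -> Tuple[str, Dict[str, Perf]]:
    """Split one plugin output line into status text and performance data."""
    status, _, perf_text = line.partition("|")
    perf: Dict[str, Perf] = {}
    for item in perf_text.split():
        label, _, rest = item.partition("=")
        fields = rest.split(";")
        value = float(fields[0])  # assumes no unit suffix such as "W" or "%"
        warn = float(fields[1]) if len(fields) > 1 and fields[1] else None
        crit = float(fields[2]) if len(fields) > 2 and fields[2] else None
        perf[label] = (value, warn, crit)
    return status.strip(), perf


sample = (
    "Warning - Tesla K20 [persistenceMode = Warning]|"
    "GPUTemperature=37;85;100; PWRUsage=31.61;150;200; SMClock=705"
)
status, perf = parse_plugin_output(sample)
print(status)
print(perf["GPUTemperature"])
```

Entries such as `SMClock=705` carry no thresholds, so their warning and critical fields come back as `None`.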

NVIDIA Management Library

The "NVIDIA Management Library" (NVML) is a C-based API for managing NVIDIA GPUs.[1] NVML's runtime library ("libnvidia-ml") is supplied with the NVIDIA video driver. The NVML SDK includes the stub libraries, the header files and example applications. Each new version of NVML has remained backwards compatible, which helps with the creation of third-party software for managing NVIDIA GPUs. The functional scope of the NVML library is available predominantly for Tesla series products. The following information can be queried using NVML:[1]

- ECC status information (error counts)

- GPU load (memory & processor)

- Temperature and fan speed

- Active computational processes

- GPU clock rates

- Power management

For programming in C, the Tesla Deployment Kit (developer.nvidia.com), which contains all of the components required for NVML, can be downloaded. The C components are not required, however, for using the script bindings or the GPU plugin. The Perl and Python scripting languages are officially supported through bindings (see below). The NVML API Reference Manual provides additional information about the NVML library.

Supported Operating Systems and GPUs

According to the reference manual, the following operating systems (OSs) and GPUs are supported:[2]

- OS Platforms

- Windows Server 2008 R2 64-bit, Windows 7 64-bit

- Linux 32-bit and 64-bit

- GPUs

- Full Support

- NVIDIA Tesla™ Line: S2050, C2050, C2070, C2075, M2050, M2070, M2075, M2090, X2070, X2090, K10, K20, K20X

- NVIDIA Quadro® Line: 4000, 5000, 6000, 7000, M2070-Q, 600, 2000, 3000M and 410

- NVIDIA GeForce® Line: None

- Limited Support

- NVIDIA Tesla™ Line: S1070, C1060, M1060

- NVIDIA Quadro® Line: All other current and previous generation Quadro-branded parts

- NVIDIA GeForce® Line: All current and previous generation GeForce-branded parts

The plugin makes its NVML calls through a Perl binding, which must be installed first.

Installing the Perl Binding

A collection of officially supported bindings for Python and Perl are offered from:

They make it possible to write software against the NVML API in Python and Perl.

When installing the Perl bindings, make sure that they match the installed driver version. With Ubuntu 12.04, version 304.88 from the repository should be used.[3]

$ nvidia-smi -a | grep 'Driver Version'

Driver Version : 304.88

This driver can be used with the current Perl bindings in the "nvidia-ml-pl-4.304.1" package.
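The version check above can be sketched in Python. The sketch reads the driver version from `nvidia-smi -a` style text and compares its major number with the bindings package name. Note the assumption: the rule that the second component of the package version (`304` in `4.304.1`) names the driver branch is inferred only from the single pair given in this article and is not a documented naming scheme. The function names are hypothetical as well.

```python
import re
from typing import Optional


def driver_version(smi_output: str) -> Optional[str]:
    """Extract the driver version from `nvidia-smi -a` style text."""
    match = re.search(r"Driver Version\s*:\s*([\d.]+)", smi_output)
    return match.group(1) if match else None


def binding_matches_driver(binding_pkg: str, driver: str) -> bool:
    """Compare the driver major version with the bindings package name.

    Assumption (not documented): in "nvidia-ml-pl-4.304.1" the second
    component of the version, "304", names the driver branch.
    """
    version = binding_pkg.rsplit("-", 1)[-1]  # "4.304.1"
    parts = version.split(".")
    return len(parts) >= 2 and parts[1] == driver.split(".")[0]


sample = "Driver Version : 304.88"
found = driver_version(sample)
print(found)
print(binding_matches_driver("nvidia-ml-pl-4.304.1", found))
```

On a real host the sample text would be replaced by the captured output of `nvidia-smi -a`.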

Note: If the following message appears while the makefile is being created (for example, when the drivers from the repository are used instead of the proprietary NVIDIA drivers):

:~/nvidia-ml$ perl Makefile.PL

Note (probably harmless): No library found for -lnvidia-ml

Writing Makefile for nvidia::ml::bindings

Writing MYMETA.yml and MYMETA.json

then the path to the "nvidia-ml" library must be adjusted ("-L/usr/lib/nvidia-current", or "-L/usr/lib/nvidia-experimental-304" for the experimental driver):

$ chmod u+w Makefile.PL

$ vi Makefile.PL

use ExtUtils::MakeMaker;

# See lib/ExtUtils/MakeMaker.pm for details of how to influence

# the contents of the Makefile that is written.

WriteMakefile(

NAME => 'nvidia::ml::bindings',

PREREQ_PM => {}, # e.g., Module::Name => 1.1

LIBS => ['-L/usr/lib/nvidia-current -lnvidia-ml'], # e.g., '-lm'

DEFINE => '', # e.g., '-DHAVE_SOMETHING'

INC => '-I.', # e.g., '-I. -I/usr/include/other'

OBJECT => '$(O_FILES)', # link all the C files too

);

Afterwards, the Perl bindings will compile without warnings and can be installed.

$ perl Makefile.PL

$ make

$ sudo make install

Finally, the following command shows that the Perl modules have been installed.

$ perldoc perllocal

Mon Apr 22 09:11:08 2013: "Module" nvidia::ml::bindings

· "installed into: /usr/local/share/perl/5.14.2"

· "LINKTYPE: dynamic"

· "VERSION: "

· "EXE_FILES: "

Installing the Plugin File

To install the plugin, the "check_gpu_sensor" file must be copied to the Nagios or Icinga plugins directory and made executable.

$ sudo cp check_gpu_sensor /usr/lib/nagios/plugins/

$ sudo chmod +x /usr/lib/nagios/plugins/check_gpu_sensor

Configuring the check_gpu_sensor Plugin

NRPE Configuration

See the Icinga NRPE Plugin article for help with configuring NRPE.

On the Monitored Server

For the monitored host to call the GPU plugin properly, an NRPE configuration file must first be created (see also Icinga NRPE Plugin#Nagios/Icinga Client). The GPU to be monitored is specified by its PCI device string, which can be determined with the nvidia-smi utility.

$ nvidia-smi -a | grep 'Bus Id'

Bus Id : 0000:83:00.0

This string identifies the system's GPU.

$ sudo vi /etc/nagios/nrpe_local.cfg

command[check_gpu_sensor]=/usr/lib/nagios/plugins/check_gpu_sensor -db '0000:83:00.0'

The device ID can be used instead of the device string. The disadvantage of the device ID is that the same ID is not guaranteed to be assigned to the same device after a reboot. For that reason, the PCI string should be preferred over the device ID.
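The step from the `Bus Id` line to the NRPE command definition can be sketched in Python. This is a minimal illustration that assumes the `Bus Id : ...` line format and the plugin path shown above; the function names and the validation pattern for the PCI string (domain:bus:device.function in hexadecimal) are hypothetical additions and not part of the plugin.

```python
import re
from typing import List

# domain:bus:device.function, e.g. 0000:83:00.0 (domain may have 4 to 8 hex digits)
PCI_BUS_ID = re.compile(r"^[0-9a-fA-F]{4,8}:[0-9a-fA-F]{2}:[0-9a-fA-F]{2}\.[0-7]$")


def bus_ids(smi_output: str) -> List[str]:
    """Collect all PCI bus IDs from `nvidia-smi -a` style text."""
    return re.findall(r"Bus Id\s*:\s*(\S+)", smi_output)


def nrpe_command(bus_id: str,
                 plugin: str = "/usr/lib/nagios/plugins/check_gpu_sensor") -> str:
    """Build the nrpe_local.cfg line for one GPU, rejecting malformed IDs."""
    if not PCI_BUS_ID.match(bus_id):
        raise ValueError(f"not a PCI bus ID: {bus_id!r}")
    return f"command[check_gpu_sensor]={plugin} -db '{bus_id}'"


sample = "Bus Id : 0000:83:00.0"
for bus_id in bus_ids(sample):
    print(nrpe_command(bus_id))
```

Rejecting anything that does not look like a PCI string guards against accidentally writing a plain device ID such as `0` into the `-db` option.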

$ ./check_gpu_sensor -db 0000:83:00.0

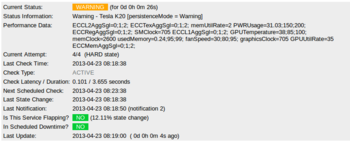

Warning - Tesla K20 [persistenceMode = Warning]|ECCL2AggSgl=0;1;2; ECCTexAggSgl=0;1;2; memUtilRate=1 PWRUsage=31.61;150;200;

ECCRegAggSgl=0;1;2; SMClock=705 ECCL1AggSgl=0;1;2; GPUTemperature=37;85;100; memClock=2600 usedMemory=0.24;95;99; fanSpeed=30;80;95;

graphicsClock=705 GPUUtilRate=27 ECCMemAggSgl=0;1;2;

$ ./check_gpu_sensor -d 0

Warning - Tesla K20 [persistenceMode = Warning]|ECCL2AggSgl=0;1;2; ECCTexAggSgl=0;1;2; memUtilRate=0 PWRUsage=30.36;150;200;

ECCRegAggSgl=0;1;2; SMClock=705 ECCL1AggSgl=0;1;2; GPUTemperature=38;85;100; memClock=2600 usedMemory=0.24;95;99; fanSpeed=30;80;95;

graphicsClock=705 GPUUtilRate=0 ECCMemAggSgl=0;1;2;

Finally, the IP address of the Nagios or Icinga server (e.g. 10.0.0.3) must be entered in the "/etc/nagios/nrpe.cfg" file to permit an NRPE connection.

$ sudo vi /etc/nagios/nrpe.cfg

...

allowed_hosts=127.0.0.1,10.0.0.3

...

Once the NRPE server has been restarted, the NRPE configuration on the monitored host is complete.

$ sudo /etc/init.d/nagios-nrpe-server restart

On Icinga Servers

For Icinga servers, the following will be required:

- an Icinga installation

- and an NRPE plugin (Icinga NRPE Plugin#Icinga Server) for monitoring a remote host

If both components have been successfully installed and configured, a new service configuration can be created for the GPU host.

:~# vi /usr/local/icinga/etc/objects/gpu-host.cfg

define host{

use linux-server

host_name GpuNode

alias GpuNode

address 10.0.0.1

}

define service{

use generic-service

host_name GpuNode

service_description GPU SENSOR

check_command check_nrpe!check_gpu_sensor

}

For this service to become active, the path to the service file must be added to the Icinga configuration.

:~# vi /usr/local/icinga/etc/icinga.cfg

[...]

cfg_file=/usr/local/icinga/etc/objects/gpu-host.cfg

[...]

Afterwards, the NRPE connection with the GPU sensor plugin can be tested.

OK - Tesla K20 |ECCL2AggSgl=0;1;2; ECCTexAggSgl=0;1;2; memUtilRate=0 PWRUsage=49.81;150;200; ECCRegAggSgl=0;1;2; SMClock=705

ECCL1AggSgl=0;1;2; GPUTemperature=38;85;100; memClock=2600 usedMemory=0.24;95;99; fanSpeed=30;80;95; graphicsClock=705

GPUUtilRate=0 ECCMemAggSgl=0;1;2;

References

- [1] NVIDIA Management Library (NVML) (developer.nvidia.com)

- [2] NVML API Reference Manual (developer.download.nvidia.com)

- [3] Ubuntu nvidia-current package (packages.ubuntu.com)